AI Server Components: Engineering Next-Gen Data Center Hardware for 100kW Racks

Traditional enterprise racks draw 5–10 kW, whereas modern AI racks routinely demand 40 to 80 kW, and the densest configurations exceed 100 kW. Surviving this thermal and power environment requires rethinking the components on the board itself. High-density Power Management ICs (PMICs), advanced polymer capacitors, and tightly toleranced high-speed connectors are no longer optional upgrades; they are mandatory for maintaining signal integrity and preventing cluster-wide failures.

The 100kW Reality: How AI Workloads Push Physical Limits

AI workloads push physical limits by increasing rack power density from 10 kW to over 130 kW, generating localized heat that causes standard electronic components to throttle or fail under continuous load.

The Paradigm Shift in Power Density

The physical architecture of the data center is dictated by the power draw of its racks. According to 2026 data from the Uptime Institute and Introl, the global average data center Power Usage Effectiveness (PUE) remains stagnant around 1.58. However, next-generation AI-optimized facilities utilizing liquid cooling and high-efficiency components are targeting a PUE of 1.08–1.10. This efficiency is not merely an environmental goal; it is an operational necessity.

According to HPE Store and IntuitionLabs data center guides, the NVIDIA GB200 NVL72 rack consumes up to 132 kW of power under full load, more than ten times the draw of a traditional enterprise rack. Components designed for a 10 kW environment degrade or fail outright when subjected to the ambient and localized temperatures generated by a 132 kW rack. Consequently, macro-level facility cooling is insufficient if the micro-components on the motherboard cannot survive the localized thermal stress.
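To make the PUE figures concrete, the sketch below applies the standard definition (PUE = total facility power / IT equipment power) to the 132 kW rack figure quoted above. The function names are illustrative, and treating the full rack draw as IT load is a simplifying assumption.

```python
def facility_power_kw(it_load_kw: float, pue: float) -> float:
    """Total facility power implied by PUE = total facility power / IT power."""
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return it_load_kw * pue


def overhead_kw(it_load_kw: float, pue: float) -> float:
    """Power spent on cooling, conversion, and other non-IT loads."""
    return facility_power_kw(it_load_kw, pue) - it_load_kw


RACK_KW = 132.0  # full-load rack figure quoted in the text (assumed to be all IT load)

for label, pue in [("global average", 1.58), ("AI-optimized target", 1.10)]:
    print(f"{label}: PUE {pue:.2f} -> {overhead_kw(RACK_KW, pue):.1f} kW overhead per rack")
```

Under these assumptions, the gap between the two PUE values is roughly 63 kW of overhead per rack, which is why the efficiency target is an operational concern rather than only an environmental one.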

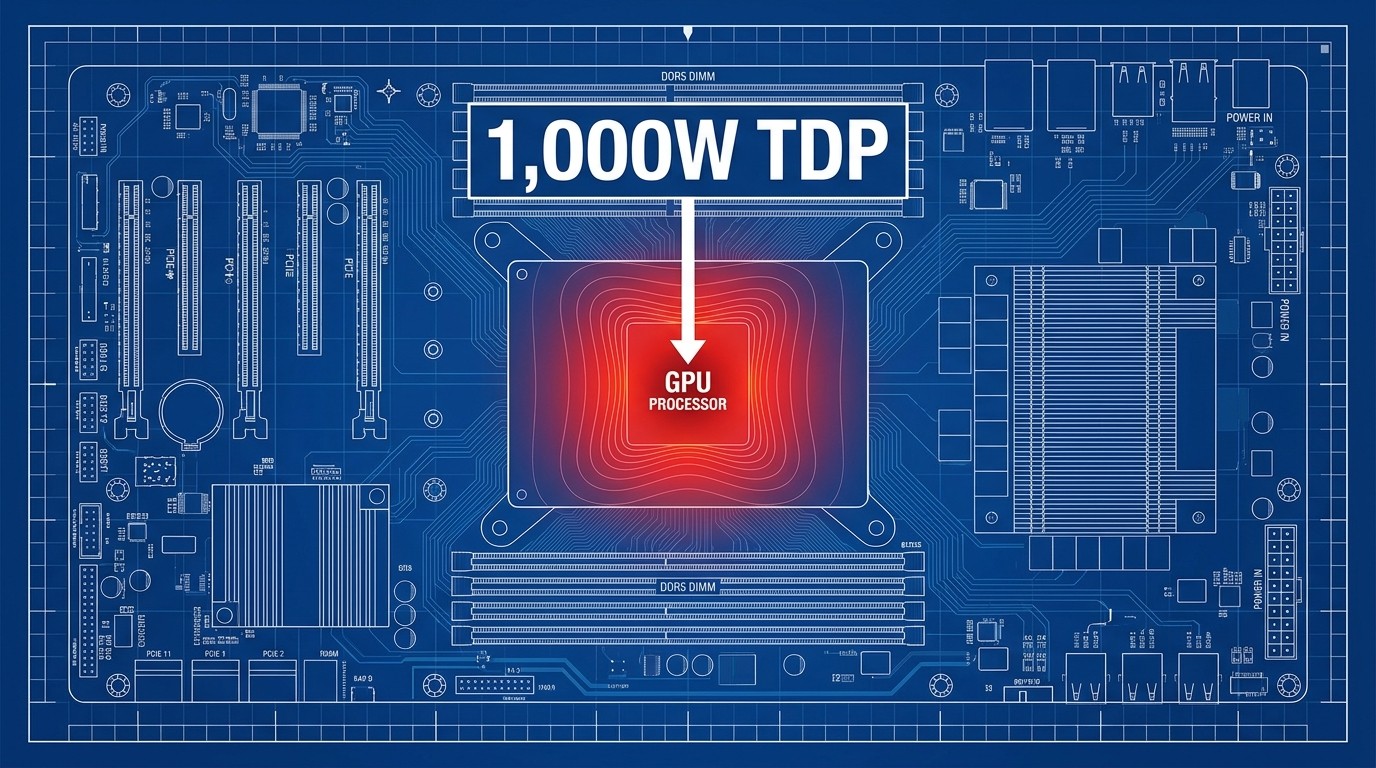

Localized Heat and the 1,000W TDP Challenge

The core driver of this rack-level power density is the individual processor. Based on 2026 engineering analyses from Spheron, the NVIDIA B200 GPU operates at a Thermal Design Power (TDP) of 1,000W (air-cooled) to 1,200W (liquid-cooled). This creates extreme localized heat zones on the server motherboard. Adjacent components, particularly those responsible for power delivery and signal routing, must be engineered to operate continuously within these high-temperature microclimates without degrading performance.

Power Management ICs in High-Heat Environments

High-density Power Management ICs (PMICs) regulate voltage for 700W+ server platforms and are engineered to survive extreme localized heat, preventing thermal throttling in densely packed AI clusters.

The Role of High-Density PMICs in AI Servers

A PMIC is responsible for converting and routing power from the server's main supply to individual processors, memory modules, and interconnects. In legacy servers, power delivery was relatively stable. In AI servers, the dynamic load fluctuations of Large Language Model (LLM) training require advanced analog voltage regulation capable of supporting 700W+ per server platform instantaneously. If a PMIC cannot deliver clean, stable voltage during a sudden compute spike, the GPU will experience voltage droop, leading to bit errors or system crashes.
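A common way to reason about voltage droop is the target-impedance rule of thumb: the power delivery network must stay below the allowed voltage deviation divided by the load step. The sketch below is a minimal illustration of that rule; the rail voltage, droop budget, and current step are hypothetical values chosen for the example, not figures from any GPU or PMIC datasheet.

```python
def target_impedance_ohms(rail_v: float, allowed_droop_fraction: float,
                          load_step_a: float) -> float:
    """Maximum PDN impedance that keeps droop within budget for a given load step."""
    if load_step_a <= 0:
        raise ValueError("load step must be positive")
    return rail_v * allowed_droop_fraction / load_step_a


def droop_v(load_step_a: float, pdn_impedance_ohms: float) -> float:
    """First-order droop estimate: current step times PDN impedance."""
    return load_step_a * pdn_impedance_ohms


# Hypothetical example values (assumptions, not vendor data).
RAIL_V = 0.8            # core rail voltage
DROOP_BUDGET = 0.03     # 3% allowed deviation
LOAD_STEP_A = 500.0     # sudden current step during a compute spike

z_target = target_impedance_ohms(RAIL_V, DROOP_BUDGET, LOAD_STEP_A)
print(f"target impedance: {z_target * 1e6:.0f} micro-ohms")
print(f"droop at 2x target impedance: {droop_v(LOAD_STEP_A, 2 * z_target) * 1e3:.0f} mV")
```

With these assumed numbers the budget works out to tens of micro-ohms, which illustrates why the regulator and its decoupling network have to sit so close to the processor.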

Surviving Extreme Thermal Environments

Because PMICs are placed as close to the GPU as possible to minimize power delivery impedance, they absorb significant heat from it. According to industry component standards and Qorvo PMIC specifications listed by Mouser Electronics, advanced high-density PMICs are now specified and tested for operating temperatures up to +155°C. This thermal resilience ensures that the voltage regulation module does not become the thermal bottleneck, allowing the processor to maintain maximum clock speeds without localized thermal throttling.
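Whether a given PMIC stays inside its rating can be estimated with the usual first-order thermal model, T_j = T_ambient + P × θ_JA. The sketch below assumes the +155°C figure applies to the device's maximum operating temperature; the local ambient temperature, dissipation, and thermal resistance are hypothetical placeholders that would come from board measurements and the part's datasheet.

```python
RATED_LIMIT_C = 155.0  # rating quoted in the text (assumed to be the device limit)


def junction_temp_c(local_ambient_c: float, dissipation_w: float,
                    theta_ja_c_per_w: float) -> float:
    """First-order estimate: T_j = T_ambient + P * theta_JA."""
    return local_ambient_c + dissipation_w * theta_ja_c_per_w


def thermal_margin_c(tj_c: float, limit_c: float = RATED_LIMIT_C) -> float:
    """Positive margin means the part stays below its rated limit."""
    return limit_c - tj_c


# Hypothetical operating point next to a high-TDP GPU (assumed values).
tj = junction_temp_c(local_ambient_c=95.0, dissipation_w=4.0, theta_ja_c_per_w=12.0)
print(f"estimated junction temperature: {tj:.0f} C, margin: {thermal_margin_c(tj):.0f} C")
```

The same operating point checked against a 105°C limit would give a negative margin, which is the situation the higher rating is meant to avoid.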

Advanced Capacitors: Preventing Degradation Under Continuous Load

Standard server capacitors fail under continuous AI workloads, requiring data centers to adopt conductive polymer and hybrid aluminum electrolytic capacitors that guarantee long-term endurance at high temperatures.

Why Standard Server Capacitors Fail in AI Racks

Standard electrolytic capacitors remain the norm for low-density enterprise web servers and are an excellent choice when cost-effective bulk capacitance is the priority, but they fail under continuous AI workloads. Users on community forums often report that standard electrolytic capacitors bulge, leak, or experience severe Equivalent Series Resistance (ESR) degradation when subjected to the continuous 100% utilization of LLM training. For data center architects who prioritize 24/7 LLM training uptime, hybrid polymer capacitors offer a more resilient path.

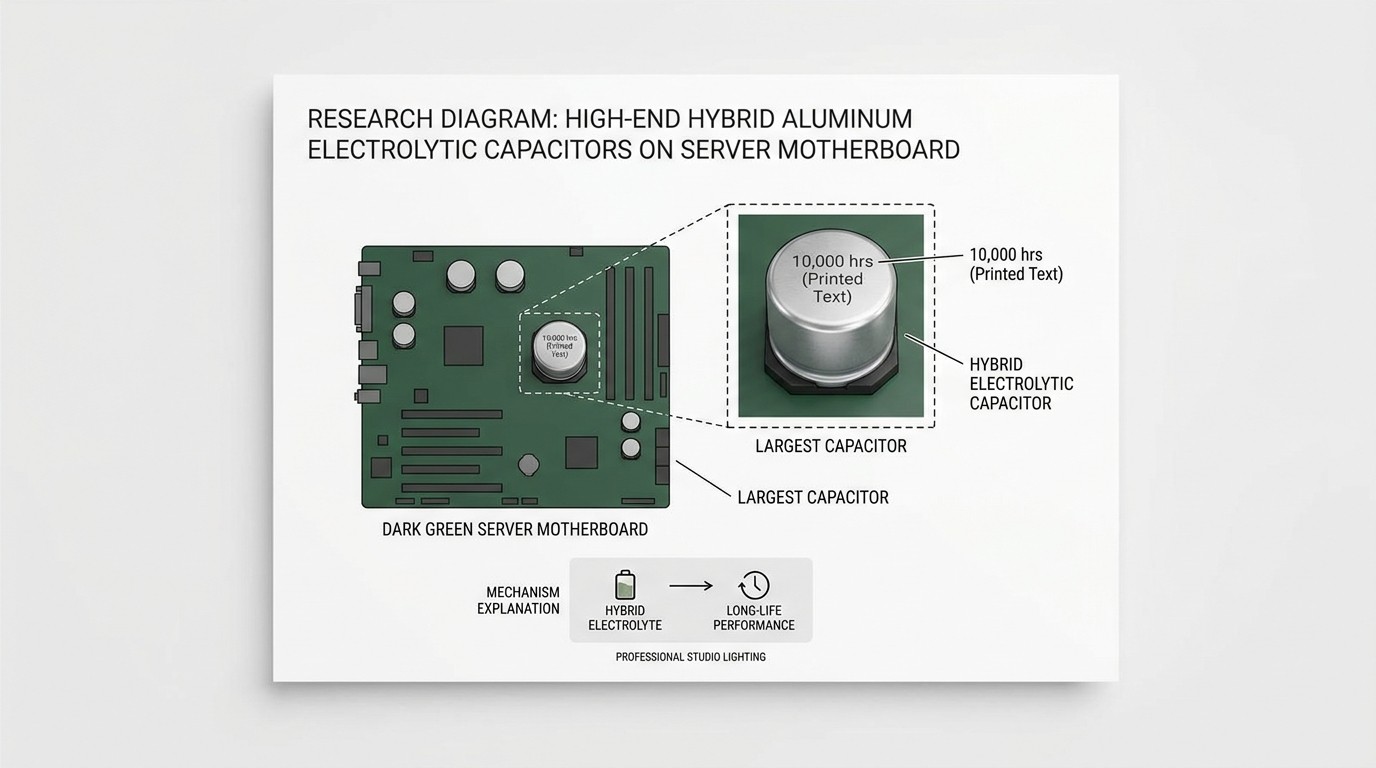

Specifications for Polymer and Hybrid Capacitors

To handle high-frequency switching and high ripple currents without degrading, AI server motherboards require advanced materials. According to component specifications from Nippon Chemi-Con and Samwha Electric, conductive polymer and hybrid aluminum electrolytic capacitors are specifically rated for a guaranteed lifespan of 10,000 hours at 105°C.

Translating this specification to a real-world scenario: a 10,000-hour endurance rating means a data center can run a server cluster at maximum thermal load for over 13 months continuously without a single scheduled maintenance window for capacitor degradation.
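The arithmetic behind that figure can be checked directly. The sketch below converts the rated hours into months and, as a separate illustration, applies the widely used "life doubles for every 10°C below rated temperature" rule of thumb for aluminum electrolytic capacitors. That rule is an assumption here: manufacturers publish their own lifetime models, and polymer types may follow a different curve.

```python
HOURS_PER_YEAR = 8766  # 365.25 days * 24 hours


def hours_to_months(hours: float) -> float:
    """Convert continuous operating hours into average calendar months."""
    return hours / (HOURS_PER_YEAR / 12)


def estimated_life_hours(rated_hours: float, rated_temp_c: float,
                         actual_temp_c: float) -> float:
    """Rule-of-thumb estimate: life doubles per 10 C below the rated temperature."""
    return rated_hours * 2 ** ((rated_temp_c - actual_temp_c) / 10.0)


rated = 10_000  # hours at 105 C, as quoted in the text
print(f"{rated} h at rated temperature = {hours_to_months(rated):.1f} months")

cooler = estimated_life_hours(rated, rated_temp_c=105.0, actual_temp_c=85.0)
print(f"rule-of-thumb life at 85 C: {cooler:.0f} h ({hours_to_months(cooler) / 12:.1f} years)")
```

The second figure shows why even modest reductions in local temperature matter: under the assumed rule, running 20°C below the rated point multiplies the estimated life by four.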

High-Speed Connectors and Signal Integrity at 112 Gbps

High-speed connectors maintain signal integrity at 112 Gbps by strictly controlling impedance variations, utilizing advanced shielding, and integrating active thermal management to prevent data loss.

The Physics of Signal Loss in High-Heat Servers

As data transfer rates increase, the margin for physical error shrinks sharply. According to the Cadence PCIe Design Guide, for PCIe Gen 6 (64 GT/s) and 112 Gbps PAM4 signaling, differential trace impedance variations must be strictly controlled within a ±5% tolerance to keep reflections from eroding the signal margin. Even minor impedance mismatches caused by thermal expansion in high-heat environments can collapse the eye diagram, resulting in bit errors, retransmissions, and increased latency.

As experts put it: "What keeps modern data centers running at peak speed and density? It all comes down to signal integrity, thermal management, and minimizing interference."
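The effect of an impedance mismatch can be quantified with the standard reflection coefficient, Γ = (Z_L − Z_0) / (Z_L + Z_0), and the corresponding return loss, −20·log10|Γ|. The sketch below evaluates a single discontinuity at the edge of the ±5% tolerance; the 100 Ω nominal impedance is an illustrative assumption, and a real channel contains many discontinuities whose reflections combine.

```python
import math


def reflection_coefficient(z_load_ohms: float, z_nominal_ohms: float) -> float:
    """Gamma = (Z_L - Z_0) / (Z_L + Z_0) for a single impedance step."""
    return (z_load_ohms - z_nominal_ohms) / (z_load_ohms + z_nominal_ohms)


def return_loss_db(gamma: float) -> float:
    """Return loss in dB; larger values mean less reflected energy."""
    if gamma == 0:
        return math.inf
    return -20.0 * math.log10(abs(gamma))


Z0 = 100.0  # assumed nominal differential impedance for illustration

for deviation in (0.05, 0.10, 0.15):
    gamma = reflection_coefficient(Z0 * (1 + deviation), Z0)
    print(f"+{deviation:.0%} mismatch: gamma = {gamma:.4f}, "
          f"return loss = {return_loss_db(gamma):.1f} dB")
```

Because PAM4 packs four voltage levels into the same swing, each eye is much smaller than in two-level signaling, so reflections that were tolerable at lower rates consume a larger share of the margin.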


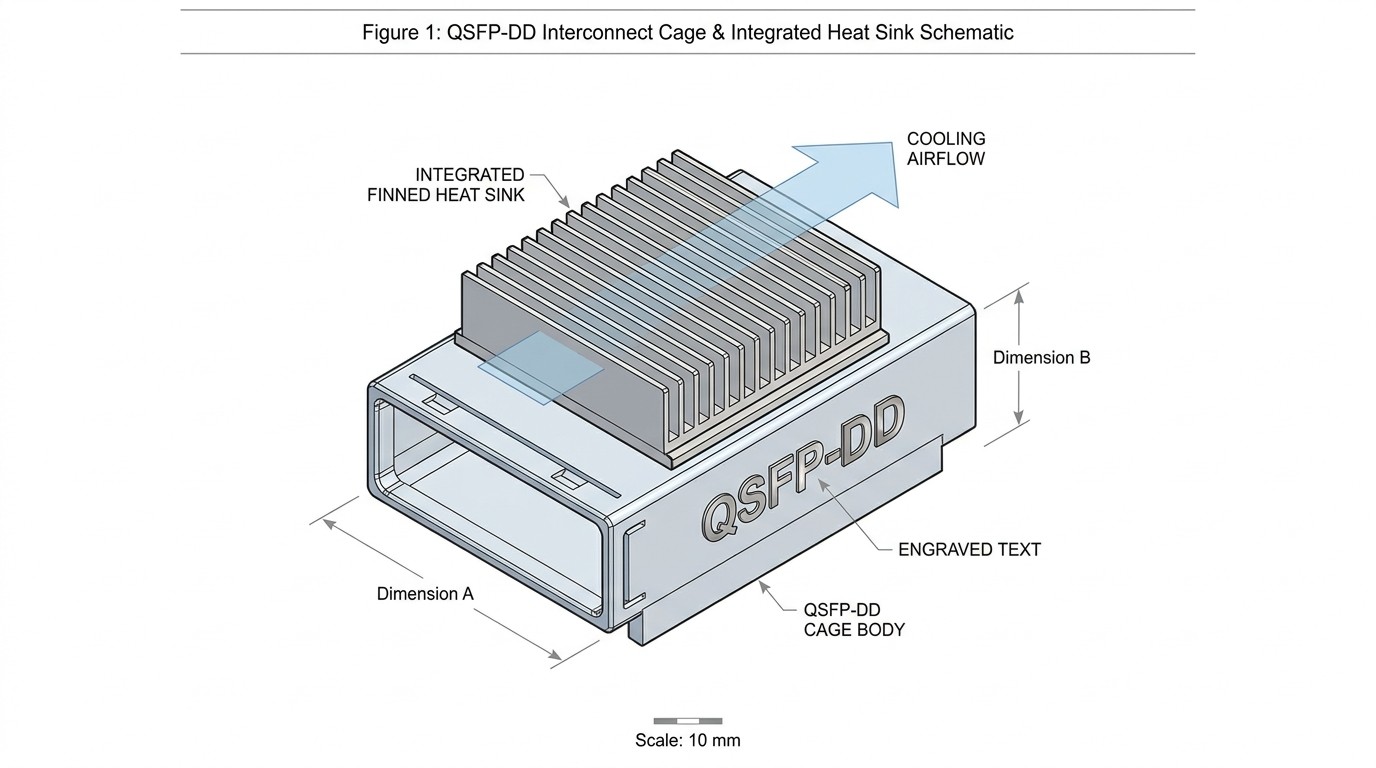

QSFP-DD, PCIe Gen 6, and Low-Latency Interconnects

To manage these tight tolerances, the physical construction of the connectors has evolved. In visual stress tests, we observed the robust metal-alloy construction of QSFP-DD cages, which can be fitted with optional finned heat sinks mounted directly on top of the cage to dissipate heat in high-density server racks.

Furthermore, hardware planners must navigate specific physical compatibility rules during staged upgrades. According to Cisco and FS.com data sheets, QSFP-DD ports are physically backward compatible and will accept legacy QSFP modules. However, legacy QSFP cages cannot accept QSFP-DD modules. This allows data center architects to upgrade server boards first while still utilizing existing legacy optical modules, staging out the capital expenditure of a full 400G upgrade.
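The one-way compatibility rule described above is easy to encode for inventory or upgrade-planning scripts. The sketch below is a minimal illustration covering only the two form factors discussed here; the type and function names are hypothetical, and it checks mechanical fit only, not whether a given host supports a given module's speed or firmware.

```python
from enum import Enum


class FormFactor(Enum):
    QSFP = "QSFP"
    QSFP_DD = "QSFP-DD"


def module_fits_cage(cage: FormFactor, module: FormFactor) -> bool:
    """QSFP-DD cages accept both module types; legacy QSFP cages accept only QSFP."""
    if cage is FormFactor.QSFP_DD:
        return True
    return module is FormFactor.QSFP


for cage in FormFactor:
    for module in FormFactor:
        verdict = "fits" if module_fits_cage(cage, module) else "does not fit"
        print(f"{module.value} module in {cage.value} cage: {verdict}")
```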

Passive Copper vs. Optical: The Short-Reach Sweet Spot

For intra-rack connections, Passive Direct Attach Copper (DAC) cables remain highly efficient, but they require strict deployment parameters. Modern passive copper cables feature EEPROM support, allowing the host server to actively recognize and monitor specific cable parameters for diagnostic reliability.

However, engineers must avoid the "Spec Sheet Trap." While a cable may be rated for 400G in a lab, real-world conditions like thermal stacking and physical vibration degrade signal integrity. According to Cisco and FIBERSTAMP specifications, passive DAC cables for 400G/800G QSFP-DD are strictly limited to short-reach intra-rack patching, with a maximum viable transmission distance of 0.5 to 2 meters (up to 3 m with thicker 26AWG conductors). Beyond this distance, attenuation in passive copper exceeds what the link can tolerate at 112 Gbps PAM4, and active optical cables (AOC) become mandatory.
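The reach limits above can be turned into a simple selection rule for cabling plans. The sketch below uses only the distances quoted in this section (2 m for standard passive DAC, 3 m with 26AWG); the function name and the two-way choice between passive DAC and AOC are simplifying assumptions, and real deployments should defer to the cable vendor's qualified lengths.

```python
def pick_intra_rack_cable(length_m: float, awg_26_available: bool = False) -> str:
    """Choose passive DAC within the quoted reach limits, otherwise AOC."""
    if length_m <= 0:
        raise ValueError("cable length must be positive")
    # Reach limits taken from the figures quoted in the text.
    passive_limit_m = 3.0 if awg_26_available else 2.0
    return "passive DAC" if length_m <= passive_limit_m else "AOC"


for length, thick in [(1.0, False), (2.5, False), (2.5, True), (5.0, True)]:
    choice = pick_intra_rack_cable(length, awg_26_available=thick)
    print(f"{length} m, 26AWG available={thick}: {choice}")
```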

The AI Server Component Thermal Tolerance Checklist

To prevent hardware bottlenecks, infrastructure planners must audit their supply chains against next-generation specifications.

Baseline Specifications for Next-Gen Hardware

| Component Category | Legacy Enterprise Spec | AI/LLM Minimum Requirement | Critical Failure Risk |
|---|---|---|---|
| Power Management IC (PMIC) | 85°C to 105°C rating | +155°C extreme thermal rating | Localized thermal throttling, voltage droop |
| Motherboard Capacitors | Standard aluminum electrolytic | Polymer/hybrid (10,000 hr @ 105°C) | ESR degradation, bulging, leaking |
| High-Speed Connectors | Standard impedance tolerance | ±5% differential trace impedance | Insertion loss, return loss, bit errors |
| Interconnect Cages | Standard QSFP | QSFP-DD with finned heat sinks | Thermal stacking, EMI leakage |
| Intra-Rack Cabling | Passive copper (up to 5 m) | Passive copper (0.5 m to 2 m max) | Signal attenuation at 112 Gbps PAM4 |
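For teams that track component data in scripts, the numeric thresholds in the table can be expressed as a small audit function. The sketch below is one possible encoding, not a standard tool: the `BoardSpec` fields and the `audit` function are hypothetical names, and only the rows with numeric thresholds are covered.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BoardSpec:
    """Hypothetical record of the values a planner would pull from datasheets."""
    pmic_max_temp_c: float
    capacitor_life_hours_at_105c: float
    impedance_tolerance_pct: float
    passive_dac_length_m: float


def audit(spec: BoardSpec) -> list[str]:
    """Return the table rows that the given spec fails; an empty list means it passes."""
    findings = []
    if spec.pmic_max_temp_c < 155:
        findings.append("PMIC rated below +155 C")
    if spec.capacitor_life_hours_at_105c < 10_000:
        findings.append("capacitor endurance below 10,000 h at 105 C")
    if spec.impedance_tolerance_pct > 5:
        findings.append("impedance tolerance wider than +/-5%")
    if spec.passive_dac_length_m > 2:
        findings.append("passive copper run longer than 2 m")
    return findings


legacy = BoardSpec(105, 2_000, 10, 5)    # illustrative legacy-style values
ai_ready = BoardSpec(155, 10_000, 5, 1.5)

print("legacy:", audit(legacy))
print("ai_ready:", audit(ai_ready))
```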

Next Steps for Infrastructure Planning

Macro-level data center efficiency and uptime begin at the micro-component level. The transition to 100kW racks requires uncompromising standards for PMICs, capacitors, and high-speed connectors. Infrastructure planners must move beyond generic IT specifications and validate every component for extreme thermal tolerance and strict signal integrity margins.

To operationalize these standards, download the complete 2026 AI Server Component Thermal Tolerance Checklist or consult engineering deep-dives on PCIe Gen 6 Signal Integrity Testing to ensure your next deployment can survive the physical realities of AI workloads.

Frequently Asked Questions

What are the hidden power consumption costs of AI workloads?

Beyond the processors, hidden power costs stem from aggressive cooling requirements (fans and liquid pumps) and power conversion inefficiencies. If PMICs and power supplies are not highly efficient, a significant percentage of the rack's total power draw is wasted as radiant heat before it ever reaches the GPU.

Standard PMIC vs High-density PMIC: What is the difference for servers?

Standard PMICs are designed for stable, lower-wattage environments and typically max out at 85°C to 105°C. High-density PMICs are engineered to handle the rapid, massive power spikes (700W+) required by AI processors and are rated for operating temperatures up to +155°C, so they do not become the cause of thermal throttling.

How do you test signal integrity for next-gen data center hardware?

Signal integrity is tested using Vector Network Analyzers (VNAs) and Time Domain Reflectometers (TDRs) to measure insertion loss, return loss, and crosstalk. For 112 Gbps PAM4, engineers must validate that differential trace impedance remains within a strict ±5% tolerance under real-world thermal loads.

What causes thermal throttling in AI server motherboards?

Thermal throttling occurs when localized heat exceeds a component's safe operating limit. In AI servers, the 1,000W+ TDP of the GPUs radiates heat to adjacent components like PMICs and connectors. If these components lack adequate heat sinks or high-temperature ratings, the system firmware forces the GPU to slow down to prevent hardware damage.

Can I use legacy QSFP modules in new QSFP-DD cages?

Yes. QSFP-DD cages are physically backward compatible and will accept legacy QSFP modules, allowing for staged network upgrades. However, the reverse is not true; you cannot plug a new QSFP-DD module into a legacy QSFP cage.

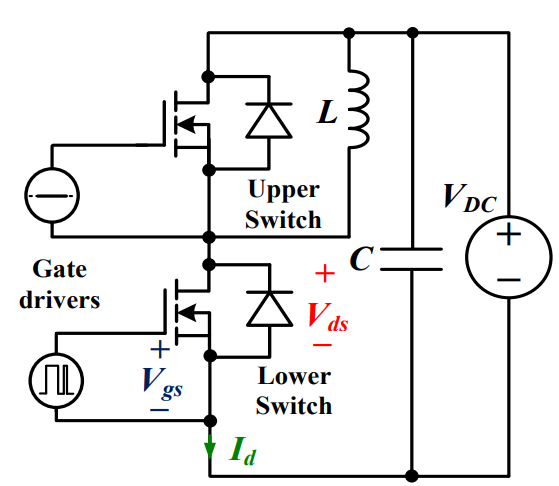
